The so-called Industrial Revolution, a period between 1760 and 1840, marked the transition of economies in Europe and the United States to new, more industrial manufacturing processes based on machines, factories and new energy sources such as coal, the steam engine, electricity, and petroleum. In the 200 years or so since the Industrial Revolution, as a result of fossil fuel burning and land use change, the concentration of carbon dioxide (CO2) in the atmosphere has increased considerably, from 280 parts per million (ppm) to close to 400 ppm. It is predicted to reach 500 ppm by 2050 and 800 ppm or more by the end of the century. Scientists calculate that the ocean is currently absorbing about one quarter of the CO2 that humans are emitting. This may sound like great news for us air-breathing bipedal animals, but it is actually bad news for the planet, including us.

Consequences of Ocean Acidification

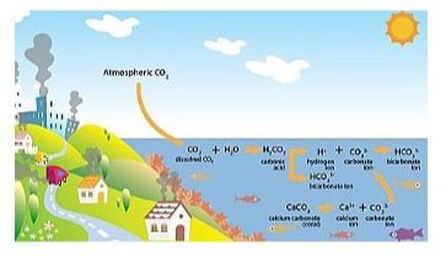

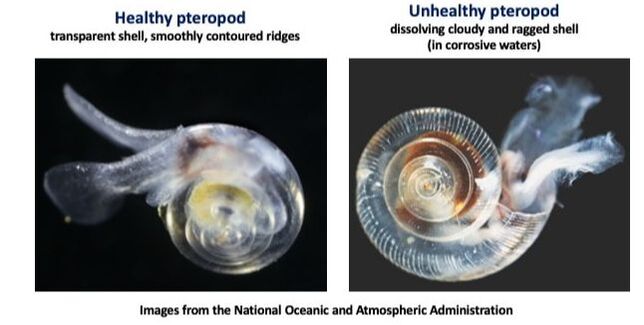

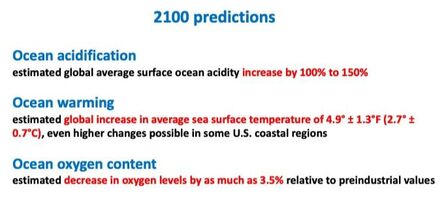

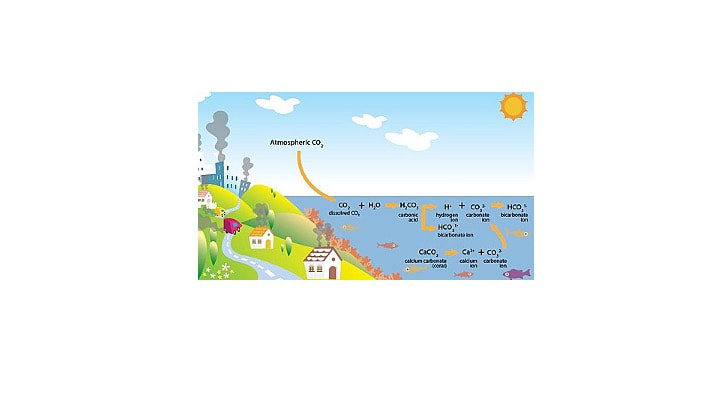

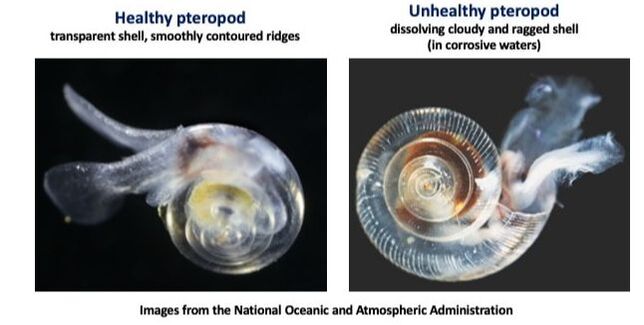

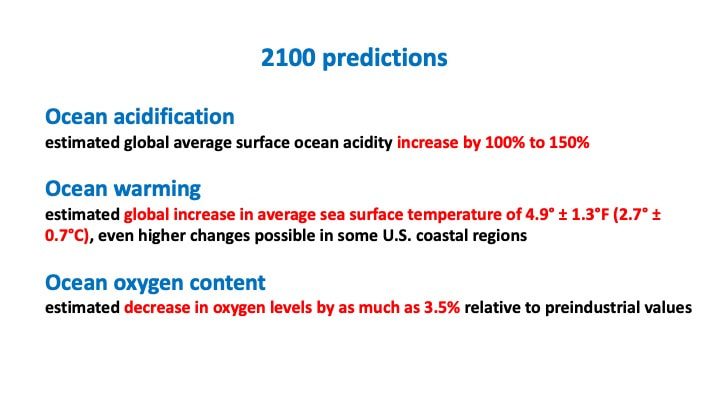

Chemically speaking, when seawater absorbs CO2, carbonic acid forms and dissociates, releasing hydrogen ions. The rising hydrogen ion concentration lowers the pH, making the water more acidic, and reduces the carbonate ion concentration, because carbonate ions combine with the excess hydrogen ions. Over the past 200 years, the pH of the ocean surface has fallen by 0.1 units (from 8.2 to 8.1). That does not sound like much, but because the pH scale is logarithmic it translates into a roughly 30% increase in acidity. It is estimated that, if current emission trends continue, the pH could fall by an additional 0.3 units by the end of this century, an estimated 120% increase in acidity.
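To see why a small pH change means a large change in acidity, here is a minimal Python sketch of the arithmetic. It assumes only the standard definition of pH as the negative base-10 logarithm of the hydrogen ion concentration; the function names are illustrative and not taken from any report. With exact values, a 0.1 drop works out to about 26% more hydrogen ions and a 0.3 drop to about 100%, so published percentages vary somewhat with rounding and with the precise pH values used.

```python
def hydrogen_ion_concentration(ph: float) -> float:
    """Hydrogen ion concentration (mol/L) implied by pH = -log10([H+])."""
    return 10 ** (-ph)


def acidity_increase_percent(ph_drop: float) -> float:
    """Percent increase in hydrogen ion concentration for a given drop in pH."""
    return (10 ** ph_drop - 1) * 100


if __name__ == "__main__":
    # pH drops discussed in the text: 0.1 so far, a further 0.3 projected
    for drop in (0.1, 0.3):
        increase = acidity_increase_percent(drop)
        print(f"pH drop of {drop:.1f}: about {increase:.0f}% more hydrogen ions")
```

Because the relationship is exponential, each further 0.1 drop multiplies the hydrogen ion concentration by the same factor (about 1.26) rather than adding a fixed amount.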

Unfortunately, ocean acidification is not the whole story. Our oceans are also getting warmer, and their oxygen concentration is going down (deoxygenation), with a reduction of approximately 2% since 1960. Oxygen content is falling for two reasons: 1) global (and therefore ocean) warming of about 0.55°C since the 1970s, since cool water can hold more oxygen than warm water; and 2) excess nutrients from our waste, agriculture and fossil fuel burning entering coastal waters, which leads to an overgrowth of phytoplankton (microalgae) that then decays and uses up a lot of oxygen.

A research study published in the journal Nature in 2019 showed that marine species are more vulnerable to warming than land species: they are more likely to live at dangerously high temperatures, and they are disappearing from their habitats due to warming twice as often as land species. Land animals can find refuge from the heat by moving to forests, shaded areas or underground habitats, while marine animals cannot.

Massachusetts, a state with rapidly acidifying coastal waters, is the second-highest seafood industry employer. Earlier this year, a “report on the ocean acidification in Massachusetts” was published, recommending strategies to reduce nutrient pollution, restore coastal wetlands, and improve coastal monitoring. Examples include planting more marine algae and kelp (to increase absorption of CO2 and reduce acidification) and spreading waste shells near oyster beds (to raise the carbonate concentration in the water). Coastal water acidification, as opposed to ocean acidification, is a more localized process driven by nutrients entering the water from adjacent land, bacteria involved in decomposition, and algal blooms. In 2018, the US Environmental Protection Agency (EPA) issued guidelines for measuring acidification changes in coastal waters.

What can we, regular citizens, do? It all goes back to carbon emissions. We have to reduce our contribution, or “carbon footprint”, by using less energy at home and when traveling, following the three Rs (reduce, reuse, recycle), and limiting plastic and pesticide use.
